PySpark Read Parquet File

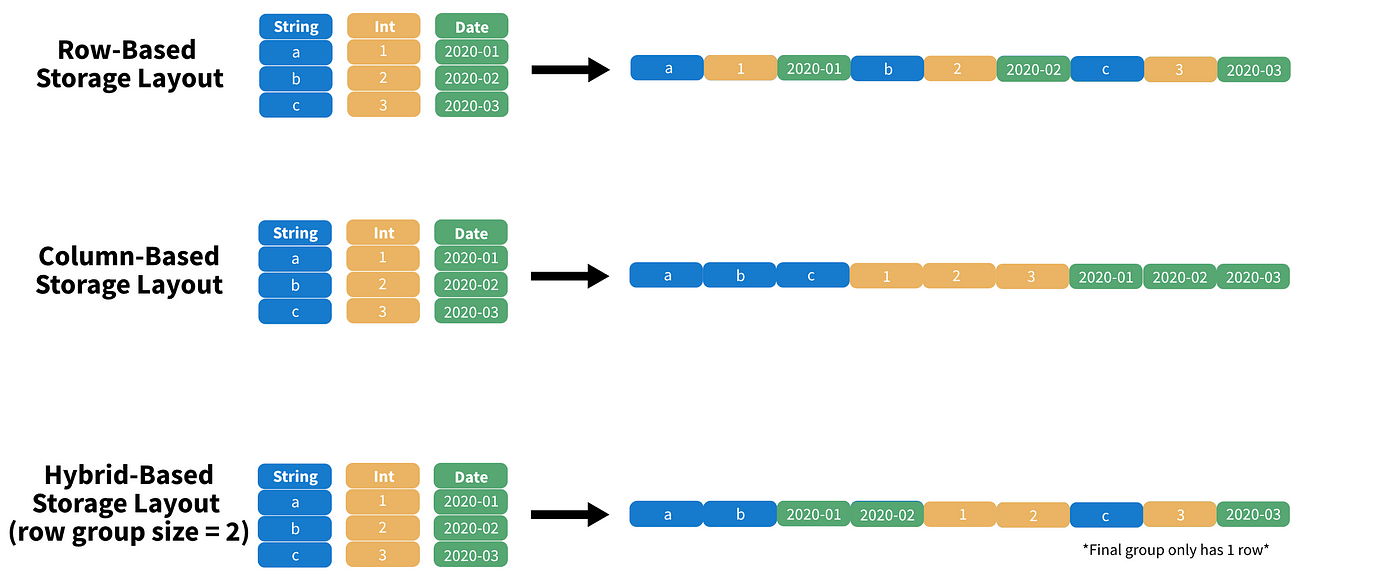

Apache Parquet is a columnar file format that provides optimizations to speed up queries, and it is supported by many other data processing systems. Spark SQL supports both reading and writing Parquet files and automatically preserves the schema of the original data, so you can write a DataFrame into a Parquet file and read it back with the same columns and types. This tutorial covers what Apache Parquet is, its advantages, and how to read and write it from PySpark. Older examples start by creating an instance of `SQLContext`; since Spark 2.0 the `SparkSession` (available as `spark` in the pyspark shell) is the usual entry point. Reading goes through `spark.read.parquet` and writing through `DataFrame.write.parquet`; the same writer can also export a DataFrame to a CSV file.

`read.parquet` is the method PySpark provides for reading data from Parquet files; it is exposed on the `DataFrameReader` returned by `spark.read`. To export a DataFrame, use its `write` attribute, which returns a `DataFrameWriter` (`write` is a property, not a method call). To save a DataFrame as multiple Parquet files of roughly a chosen size, call `repartition` on the DataFrame before writing, since each non-empty partition is written as a separate file.
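
Splitting the output with `repartition` can be sketched as follows. This is a minimal sketch, not a definitive recipe: the helper names `files_for_target_size` and `write_in_n_files` are hypothetical, and the total data size is an estimate you supply yourself, since the final Parquet size is not known before writing. The helpers take an existing DataFrame, so nothing here starts a Spark session.

```python
import math


def files_for_target_size(total_bytes, target_file_bytes):
    """Estimate how many output files give roughly target_file_bytes each.

    Both arguments are estimates supplied by the caller (an assumption of
    this sketch); compressed Parquet output is usually smaller than raw data.
    """
    if target_file_bytes <= 0:
        raise ValueError("target_file_bytes must be positive")
    return max(1, math.ceil(total_bytes / target_file_bytes))


def write_in_n_files(df, path, num_files):
    """Write a PySpark DataFrame as Parquet using num_files partitions.

    Each non-empty partition becomes one part file, so the output
    directory holds at most num_files Parquet files.
    """
    if num_files < 1:
        raise ValueError("num_files must be at least 1")
    # repartition() shuffles the rows into num_files partitions before writing.
    df.repartition(num_files).write.mode("overwrite").parquet(path)
```

For example, `write_in_n_files(df, "/tmp/out", files_for_target_size(10 * 1024**3, 128 * 1024**2))` would aim for about 80 files from an estimated 10 GiB of data; the output path is a placeholder.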

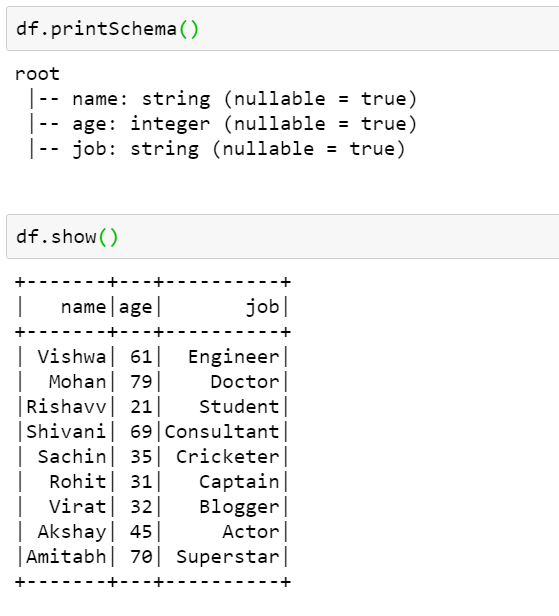

The PySpark documentation illustrates the round trip by importing `tempfile`, opening a `tempfile.TemporaryDirectory()`, writing a DataFrame into it as Parquet, and reading it back. The pandas API on Spark also offers a reader that loads a Parquet object from a file path and returns a DataFrame; its parameters include `path` (a string file path) and `columns` (a list).
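
A write-then-read round trip through a temporary directory might look like the sketch below. The function names, the `people.parquet` file name, and the sample rows are illustrative assumptions; only `createDataFrame`, `write.mode(...).parquet(...)`, `read.parquet(...)`, and `collect()` are PySpark calls. The `SparkSession` import sits inside `demo()` so the helper can be defined without Spark installed.

```python
import os
import tempfile


def roundtrip_parquet(spark, rows, columns):
    """Write rows to a temporary Parquet directory and read them back."""
    df = spark.createDataFrame(rows, schema=columns)
    with tempfile.TemporaryDirectory() as tmp_dir:
        path = os.path.join(tmp_dir, "people.parquet")  # hypothetical name
        # mode("overwrite") replaces any existing output at the path.
        df.write.mode("overwrite").parquet(path)
        # Spark reads lazily, so collect while the directory still exists.
        return spark.read.parquet(path).collect()


def demo():
    """Run the round trip on a local session (requires pyspark and Java)."""
    from pyspark.sql import SparkSession

    spark = (
        SparkSession.builder.master("local[1]")
        .appName("parquet-roundtrip")
        .getOrCreate()
    )
    try:
        rows = [(1, "Alice"), (2, "Bob")]  # sample data for illustration
        for row in roundtrip_parquet(spark, rows, ["id", "name"]):
            print(row)
    finally:
        spark.stop()
```

Calling `demo()` in an environment with PySpark installed prints the rows that were read back; row order is not guaranteed.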

PySpark Write Parquet Working of Write Parquet in PySpark

Write a DataFrame into a Parquet file and read it back. Spark SQL supports both reading and writing Parquet files and automatically preserves the schema of the original data.

How To Read A Parquet File Using Pyspark Vrogue

PySpark provides a simple way to read Parquet files using the `read.parquet()` method, which loads the Parquet data at the given file path and returns a DataFrame.

Read Parquet File In Pyspark Dataframe news room

To read Parquet files in PySpark: `df = spark.read.format('parquet').load('filename.parquet')`. This is equivalent to `spark.read.parquet('filename.parquet')`. Spark SQL preserves the schema of the original data when reading.

PySpark Read and Write Parquet File Spark by {Examples}

PySpark comes with the function `read.parquet` for reading Parquet files from a given path. For writing, use the DataFrame's `write` attribute, which returns a `DataFrameWriter`.

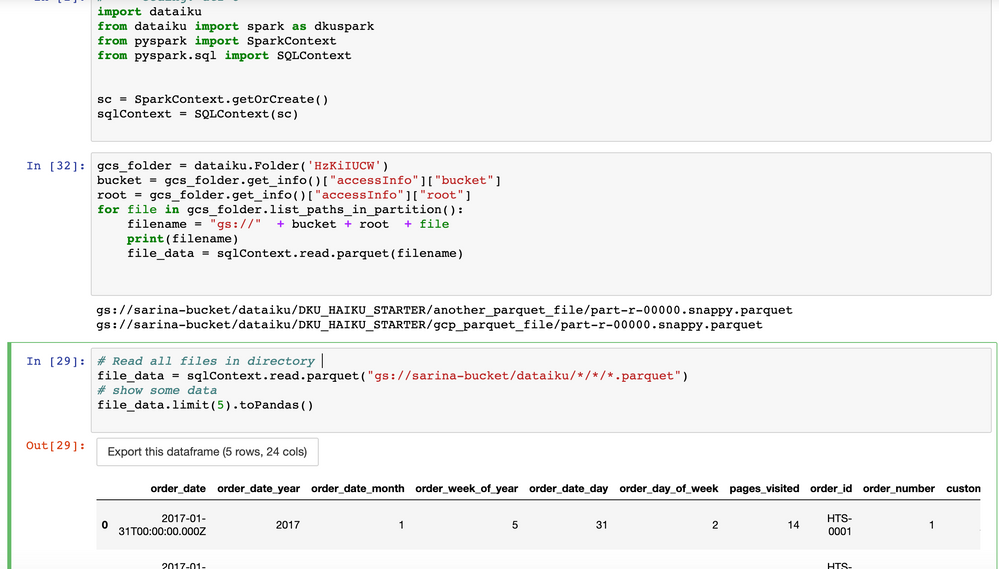

Solved How to read parquet file from GCS using pyspark? Dataiku

Spark SQL supports both reading and writing Parquet files and automatically preserves the schema of the original data.

PySpark Tutorial 9 PySpark Read Parquet File PySpark with Python

PySpark provides a simple way to read Parquet files using the `read.parquet()` method, and a DataFrame is exported through its `write` attribute, which returns a `DataFrameWriter`.

How To Read Various File Formats In PySpark: JSON, Parquet, ORC, Avro

One reader question concerns Parquet files split by region: the goal is to read them only at the sales level, which should return the data for all the regions.
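
A hedged sketch of reading at the sales level follows. The directory layout is an assumption, since the original question does not show it: the sketch supposes a sales directory containing `region=<name>` subdirectories, which is the layout Spark's partition discovery recognizes. The function names are hypothetical; `read.parquet` and the `basePath` option are the Spark calls.

```python
def read_all_regions(spark, sales_path):
    """Read every region under the sales directory into one DataFrame.

    With a region=<name> layout (assumed here), Spark discovers the
    partitions and adds a `region` column automatically.
    """
    return spark.read.parquet(sales_path)


def read_one_region(spark, sales_path, region):
    """Read a single region while keeping the `region` column.

    basePath tells Spark where partition discovery starts; without it,
    pointing at one subdirectory would drop the partition column.
    """
    base = sales_path.rstrip("/")
    return spark.read.option("basePath", base).parquet(f"{base}/region={region}")
```

With this layout, `read_all_regions(spark, "/data/sales")` covers all the regions in one call; the path is a placeholder.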

Write PySpark To CSV File

A DataFrame is exported through its `write` attribute, which returns a `DataFrameWriter`; that writer has a `csv` method alongside `parquet`. Spark SQL supports both reading and writing Parquet files and automatically preserves the schema of the original data. Parquet is a columnar format supported by many other data processing systems, and outside Spark a native, multithreaded C++ implementation of Apache Parquet has also been developed.
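
Exporting Parquet data to CSV could be sketched as below. The function name `parquet_to_csv` and its parameters are hypothetical; `read.parquet`, `write.mode`, `option("header", ...)`, and `csv` are existing PySpark calls. Note that Spark writes a directory of part files at `csv_path`, not a single CSV file.

```python
def parquet_to_csv(spark, parquet_path, csv_path, header=True):
    """Read a Parquet dataset and write it out as CSV.

    Returns the DataFrame that was read so callers can inspect it.
    """
    df = spark.read.parquet(parquet_path)
    # header=True writes the column names as the first line of each part file.
    df.write.mode("overwrite").option("header", header).csv(csv_path)
    return df
```

CSV does not carry column types, so the schema that Parquet preserved is not stored in the exported files.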

You Need To Create An Instance Of SQLContext First

Older Spark examples create an instance of `SQLContext` before reading; in current PySpark the `SparkSession` fills that role. From there, `read.parquet` reads Parquet files from a given path and returns a DataFrame, and the DataFrame's `write` attribute returns the `DataFrameWriter` used to export it.

Apache Parquet Is A Columnar File Format That Provides Optimizations To Speed Up Queries

`read.parquet` is the method PySpark provides for reading data from Parquet files, and it works from the pyspark shell. To save a DataFrame as multiple Parquet files of a chosen size, use the `repartition` method to split it before writing.

spark.read.parquet Reads The Content Of A Parquet File Written With DataFrame.write.parquet

PySpark provides a simple way to read Parquet files using the `read.parquet()` method, which returns a DataFrame that can later be written back out with `write.parquet()`.