PySpark Read From S3

PySpark Read From S3 - We can finally load our data from S3 into a Spark DataFrame. PySpark supports various file formats, such as CSV and JSON, among others. One collected question asks how to read a CSV from S3 as a Spark DataFrame using PySpark (Spark 2.4). Note that our .json file is a ... Step 1: first, we need to make sure the hadoop-aws package is available when we load Spark. Spark can also read a JSON file from Amazon S3. To read data on S3 into a local PySpark DataFrame using temporary security credentials, you need to ... Now we can use the spark.read.text() function to read our text file. And that’s it, we’re done! The reader behind spark.read is the interface used to load a DataFrame from external storage.

Now that PySpark is set up, you can read the file from S3. One snippet provides an example of reading Parquet files located in S3 buckets on AWS (Amazon Web Services). If you need to read files in an S3 bucket, only a few steps are needed, such as reading the text file from S3 or reading the data from S3 into a local PySpark DataFrame. An article dated Feb 1, 2021 sets out to build an understanding of basic read and write operations on Amazon ... Another, “How to access S3 from PySpark” (Apr 22, 2019), assumes that PySpark is already installed. To read a JSON file from Amazon S3 and create a DataFrame, you can use either ...
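The JSON snippet above is cut off before it names its two options. As a hedged illustration, here are two reader calls that exist in the PySpark API, `spark.read.json(path)` and `spark.read.format("json").load(path)`; whether these are the two the original snippet meant is an assumption. The helper names, the bucket name, and the object key are hypothetical, and the `s3a://` scheme assumes the Hadoop S3A connector is on the classpath.

```python
def s3a_path(bucket: str, key: str) -> str:
    """Build an s3a:// URI (assumes the Hadoop S3A connector is in use)."""
    return f"s3a://{bucket}/{key.lstrip('/')}"


def read_json(spark, path: str):
    """Read JSON through the dedicated shorthand on the DataFrameReader."""
    return spark.read.json(path)


def read_json_with_format(spark, path: str):
    """Read JSON through the generic format(...).load(...) route."""
    return spark.read.format("json").load(path)


# Hypothetical usage, given an existing SparkSession named `spark`:
# path = s3a_path("example-bucket", "data/events.json")
# df = read_json(spark, path)
# df_same = read_json_with_format(spark, path)
```

Both functions return a DataFrame; the generic route is mainly useful when the format name is chosen at runtime.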

Array Pyspark? The 15 New Answer

Pyspark supports various file formats such as csv, json,. Now that we understand the benefits of. Web how to access s3 from pyspark apr 22, 2019 running pyspark i assume that you have installed pyspak. Web if you need to read your files in s3 bucket you need only do few steps: Web spark read json file from amazon s3.

Spark SQL Architecture Sql, Spark, Apache spark

Now, we can use the spark.read.text () function to read our text file: Now that we understand the benefits of. It’s time to get our.json data! We can finally load in our data from s3 into a spark dataframe, as below. Web if you need to read your files in s3 bucket you need only do few steps:

PySpark Create DataFrame with Examples Spark by {Examples}

Web how to access s3 from pyspark apr 22, 2019 running pyspark i assume that you have installed pyspak. Read the text file from s3. Web spark read json file from amazon s3. Note that our.json file is a. Interface used to load a dataframe from external storage.

PySpark Tutorial24 How Spark read and writes the data on AWS S3

To read json file from amazon s3 and create a dataframe, you can use either. Pyspark supports various file formats such as csv, json,. Now, we can use the spark.read.text () function to read our text file: Web how to access s3 from pyspark apr 22, 2019 running pyspark i assume that you have installed pyspak. Web step 1 first,.

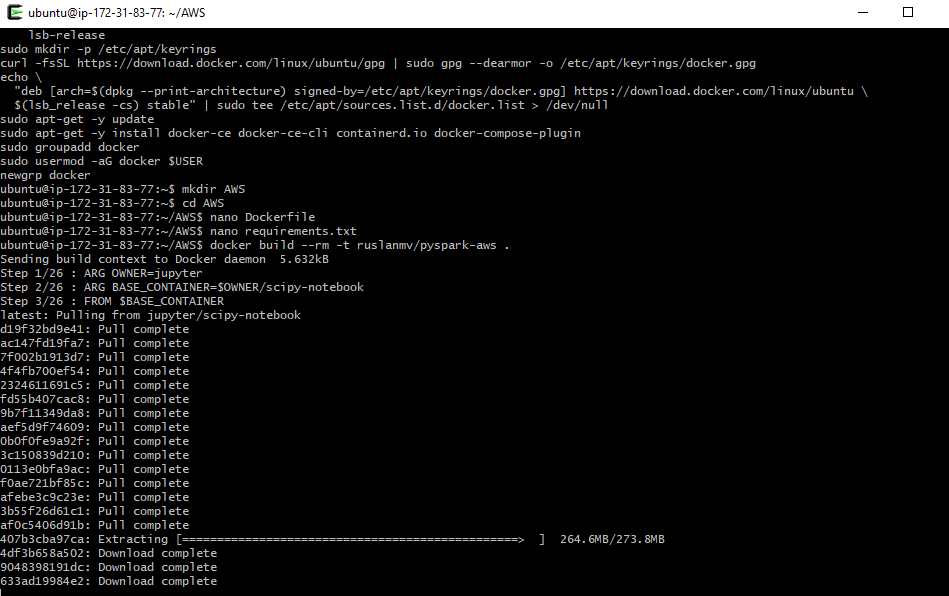

How to read and write files from S3 bucket with PySpark in a Docker

Pyspark supports various file formats such as csv, json,. Read the text file from s3. Web now that pyspark is set up, you can read the file from s3. Web spark sql provides spark.read.csv (path) to read a csv file from amazon s3, local file system, hdfs, and many other data. To read json file from amazon s3 and create.

Read files from Google Cloud Storage Bucket using local PySpark and

Now that we understand the benefits of. It’s time to get our.json data! Read the text file from s3. Web spark read json file from amazon s3. If you have access to the system that creates these files, the simplest way to approach.

How to read and write files from S3 bucket with PySpark in a Docker

Note that our.json file is a. Web and that’s it, we’re done! To read json file from amazon s3 and create a dataframe, you can use either. Web if you need to read your files in s3 bucket you need only do few steps: We can finally load in our data from s3 into a spark dataframe, as below.

PySpark Read JSON file into DataFrame Cooding Dessign

Web and that’s it, we’re done! We can finally load in our data from s3 into a spark dataframe, as below. Web how to access s3 from pyspark apr 22, 2019 running pyspark i assume that you have installed pyspak. Interface used to load a dataframe from external storage. It’s time to get our.json data!

PySpark Read CSV Muliple Options for Reading and Writing Data Frame

Web spark read json file from amazon s3. Web to read data on s3 to a local pyspark dataframe using temporary security credentials, you need to: To read json file from amazon s3 and create a dataframe, you can use either. Web how to access s3 from pyspark apr 22, 2019 running pyspark i assume that you have installed pyspak..

apache spark PySpark How to read back a Bucketed table written to S3

If you have access to the system that creates these files, the simplest way to approach. Web step 1 first, we need to make sure the hadoop aws package is available when we load spark: To read json file from amazon s3 and create a dataframe, you can use either. Pyspark supports various file formats such as csv, json,. Now.

If You Need To Read Files In An S3 Bucket, Only A Few Steps Are Needed:

And that’s it, we’re done! If you have access to the system that creates these files, the simplest way to approach ... Note that our .json file is a ... We can finally load our data from S3 into a Spark DataFrame.

It’s Time To Get Our .json Data!

Now that PySpark is set up, you can read the file from S3. To read a JSON file from Amazon S3 and create a DataFrame, you can use either ... One snippet provides an example of reading Parquet files located in S3 buckets on AWS (Amazon Web Services). To read data on S3 into a local PySpark DataFrame using temporary security credentials, you need to ...
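The temporary-credentials snippet above is truncated before its steps, so the following is only a sketch of one common arrangement, not the source's own procedure. It assumes the Hadoop S3A connector and passes an access key, secret key, and session token through the `spark.hadoop.`-prefixed `fs.s3a.*` settings together with the `TemporaryAWSCredentialsProvider` class. The function names, application name, and bucket paths are hypothetical.

```python
from typing import Dict


def temporary_credentials_conf(access_key: str, secret_key: str,
                               session_token: str) -> Dict[str, str]:
    """Spark settings that hand temporary AWS credentials to the S3A connector."""
    return {
        "spark.hadoop.fs.s3a.aws.credentials.provider":
            "org.apache.hadoop.fs.s3a.TemporaryAWSCredentialsProvider",
        "spark.hadoop.fs.s3a.access.key": access_key,
        "spark.hadoop.fs.s3a.secret.key": secret_key,
        "spark.hadoop.fs.s3a.session.token": session_token,
    }


def build_session_with_conf(conf: Dict[str, str]):
    """Create (or reuse) a SparkSession with the given settings applied."""
    # Imported lazily so the helper above can be used without PySpark installed.
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName("s3-temporary-credentials")
    for key, value in conf.items():
        builder = builder.config(key, value)
    return builder.getOrCreate()


# Hypothetical usage (the credential values come from your own STS call):
# spark = build_session_with_conf(
#     temporary_credentials_conf(access_key, secret_key, session_token))
# df_parquet = spark.read.parquet("s3a://example-bucket/data/events.parquet")
# df_json = spark.read.json("s3a://example-bucket/data/events.json")
```

Keeping the settings in a plain dictionary makes them easy to inspect or log (minus the secrets) before a session is created.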

Step 1: First, We Need To Make Sure The hadoop-aws Package Is Available When We Load Spark:

Read the text file from S3. Now we can use the spark.read.text() function to read our text file. A related question asks how to read a CSV from S3 as a Spark DataFrame using PySpark (Spark 2.4). The reader behind spark.read is the interface used to load a DataFrame from external storage.
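As a rough sketch of Step 1 followed by the text read, the code below asks Spark to fetch hadoop-aws through the `spark.jars.packages` setting and then calls `spark.read.text()`. The helper names, the application name, the version string, and the bucket path are illustrative assumptions; the hadoop-aws version generally needs to match the Hadoop version your Spark build ships with.

```python
def hadoop_aws_coordinate(hadoop_version: str) -> str:
    """Maven coordinate for hadoop-aws at the given Hadoop version."""
    return f"org.apache.hadoop:hadoop-aws:{hadoop_version}"


def build_session_with_hadoop_aws(hadoop_version: str):
    """Create a SparkSession that downloads hadoop-aws at startup."""
    # Imported lazily so the coordinate helper works without PySpark installed.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .appName("read-text-from-s3")
        .config("spark.jars.packages", hadoop_aws_coordinate(hadoop_version))
        .getOrCreate()
    )


def read_text(spark, path: str):
    """Read a text file; each line becomes one row in a column named 'value'."""
    return spark.read.text(path)


# Hypothetical usage (version and path are examples only):
# spark = build_session_with_hadoop_aws("3.3.4")
# lines = read_text(spark, "s3a://example-bucket/logs/app.txt")
# lines.show(5, truncate=False)
```

The package setting only takes effect when the session is first created, so it has to be supplied before any other code calls `getOrCreate()`.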

Read The Data From S3 Into A Local PySpark DataFrame

“How to access S3 from PySpark” (Apr 22, 2019) assumes that PySpark is already installed. An article dated Feb 1, 2021 sets out to build an understanding of basic read and write operations on Amazon ... Spark SQL provides spark.read.csv(path) to read a CSV file from Amazon S3, the local file system, HDFS, and many other data sources. Spark can also read a JSON file from Amazon S3.
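A minimal sketch of the `spark.read.csv(path)` call mentioned above, assuming a header row and automatic type inference are wanted. The helper names, the defaults, and the bucket path are hypothetical; `header`, `inferSchema`, and `sep` are standard CSV reader options.

```python
from typing import Dict


def csv_options(header: bool = True, infer_schema: bool = True,
                sep: str = ",") -> Dict[str, str]:
    """Reader options for CSV, expressed as the strings Spark expects."""
    return {
        "header": str(header).lower(),             # first line holds column names
        "inferSchema": str(infer_schema).lower(),  # extra pass to guess column types
        "sep": sep,                                # field delimiter
    }


def read_csv(spark, path: str, **kwargs):
    """Read a CSV file into a DataFrame using the options built above."""
    return spark.read.options(**csv_options(**kwargs)).csv(path)


# Hypothetical usage, given an existing SparkSession named `spark`:
# df = read_csv(spark, "s3a://example-bucket/data/sales.csv")
# df.printSchema()
```

Schema inference costs an extra pass over the data, so for large files it may be preferable to disable it and pass an explicit schema instead.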